
AI and Tools included in this pod:

Invideo, https://invideo.io/

Artlist, https://artlist.io/ for extra video short content

This podcast by Declan Dunn covers several key AI-related topics: a new video-generating GPT called Invideo.ai Video Maker, using AI to save time in meetings, a review of the book "Unmasking AI" by Dr. Joy Buolamwini, and an overview of the proposed NO AI Fraud Act in the US.

00:00:00 Intro to Episode 28

00:00:16 Invideo GPT at GPT Marketplace intro

00:02:09 Instant AI Time Savings - Meetings

00:05:09 Unmasking AI - book dive about bias in data

00:06:44 The Coded Gaze (Invideo example vid)

00:07:49 AI is only as biased as we let it be.

00:09:47 NO AI Fraud Act - US Proposal

00:11:28 We can't identify fraud, how do you stop it?

00:12:52 Invideo GPT Quick Review

00:17:17 AI People First sample vid - made with Invideo

00:20:02 Closing - Focus on saving time

Key Sections

Invideo Video Maker

A new paid video GPT service on the GPT Marketplace

Allows generating videos from text prompts up to 3600 characters

Provides timestamps, ability to edit script and visuals

Not perfect but enables fast video creation without on-camera presence
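Since the prompt limit is a hard character count, it helps to check a script before pasting it in. The following is a minimal sketch, not part of Invideo's tooling: the 3600-character figure is taken from the notes above, and the helper names `check_prompt` and `trim_to_limit` are made up for illustration.

```python
# Limit quoted in these show notes; not verified against Invideo's own documentation.
MAX_PROMPT_CHARS = 3600


def check_prompt(prompt: str, limit: int = MAX_PROMPT_CHARS) -> tuple:
    """Return (fits, characters_over) for a video script prompt."""
    over = max(0, len(prompt) - limit)
    return over == 0, over


def trim_to_limit(prompt: str, limit: int = MAX_PROMPT_CHARS) -> str:
    """Shorten a prompt to the limit, cutting at the last full sentence that fits."""
    if len(prompt) <= limit:
        return prompt
    cut = prompt[:limit]
    # Look for the last sentence-ending punctuation followed by a space.
    end = max(cut.rfind(". "), cut.rfind("! "), cut.rfind("? "))
    # If no sentence boundary is found, fall back to a hard cut at the limit.
    return cut[: end + 1] if end != -1 else cut


if __name__ == "__main__":
    script = "AI can save time in meetings. " * 200
    fits, over = check_prompt(script)
    print(f"Fits: {fits}, characters over the limit: {over}")
    print(f"Trimmed length: {len(trim_to_limit(script))}")
```

Cutting at a sentence boundary keeps the generated voiceover from ending mid-thought, which matters more for video scripts than for plain text.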

Using AI to Save Meeting Time

Services like Otter.ai and supernormal.ai can transcribe and summarize meetings

Great for remote work - creates shareable record and highlights key topics

Can analyze transcripts later with GPTs for insights
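Long transcripts often need to be split before a GPT can analyze them. Here is a rough sketch of one way to do that, assuming the transcript is available as plain-text lines. The function name `chunk_transcript` and the 4000-character default are illustrative assumptions, not a feature of Otter.ai or Supernormal.

```python
def chunk_transcript(lines, max_chars=4000):
    """Group transcript lines into chunks of at most max_chars characters.

    max_chars is an assumed budget; pick a value that suits the model you use.
    A single line longer than max_chars is kept whole in its own chunk.
    """
    chunks, current, size = [], [], 0
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        # +1 accounts for the newline that joins lines inside a chunk
        if current and size + len(line) + 1 > max_chars:
            chunks.append("\n".join(current))
            current, size = [], 0
        current.append(line)
        size += len(line) + 1
    if current:
        chunks.append("\n".join(current))
    return chunks


if __name__ == "__main__":
    sample = ["Declan: Let's review the week.", "", "David: Funding rounds are smaller."]
    for i, chunk in enumerate(chunk_transcript(sample, max_chars=40), start=1):
        print(f"--- chunk {i} ---\n{chunk}")
```

Each chunk can then be pasted into a GPT with the same question, such as "list the decisions and action items", and the answers combined afterward.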

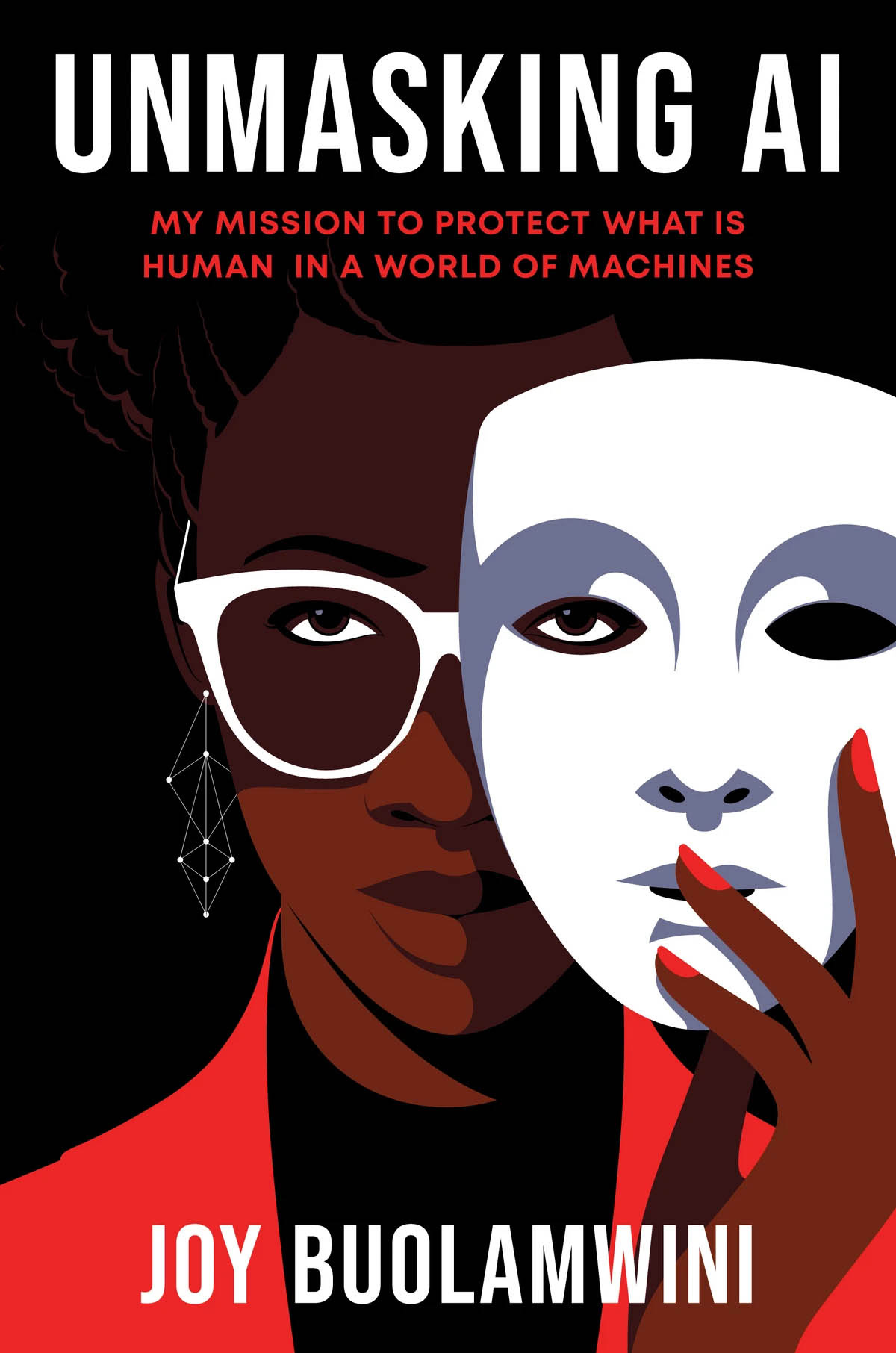

Book Review: Unmasking AI

By Dr. Joy Buolamwini, founder of the Algorithmic Justice League

Key idea: coded gaze - how prejudice and priorities shape biased tech

Example: face detection software that failed to detect Buolamwini's dark-skinned face

Calls for equitable, accountable AI serving all groups

NO AI Fraud Act

Proposed US law to protect an individual's likeness and voice from AI fraud

Makes unauthorized use of a person's image or voice illegal

Aims to empower people but enforcement questions remain

No reliable AI tools yet to detect text, image, or video fraud

Key Viewpoints

The GPT video landscape enables fast content creation but raises fraud concerns

AI can provide time savings but needs governance to reduce bias risk

Lawmakers and activists promote algorithmic accountability and oversight

More innovation needed for fraud detection and auditing AI systems

Outline

I. Invideo Video Maker GPT Overview

Capabilities and limitations

Business model and use cases

The podcast covers the explosion of new GPT services that promise major time savings but also pose risks around fraud and bias.

The Invideo GPT enables fast video content creation from text prompts. While a useful tool, all AI systems need human governance, as Dr. Buolamwini's book explores.

Her experience reveals how prejudice and priorities get encoded into technology. The NO AI Fraud Act attempts to protect individuals, but reliable tools for detecting the fraud it targets do not yet exist.

Overall, innovation races ahead while governance and accountability lag behind. Services like meeting transcription can provide efficiency but also enable surveillance.

Rapid content generation introduces fraud risks not yet solvable. As these technologies spread, more focus is needed on:

Auditing AI systems

Incentivizing accountability

Developing unbiased datasets

Expanding fraud detection

Balancing rapid progress and responsible development remains critical for realizing the benefits of AI while protecting individual rights.

Action Plan

To harness the promise of AI progress while addressing risks, key steps include:

Individuals:

Test new GPT services cautiously

Scrutinize AI content origins

Provide feedback on biases observed

Organizations:

Pilot AI tools with governance plans

Conduct impact assessments

Commit to algorithmic audits

Policymakers:

Develop incentives and guidelines for accountability

Fund fraud detection innovation

Update laws but avoid restricting innovation

Researchers:

Improve bias detection in AI systems

Create enhanced datasets representative of diversity

Invent better techniques for identifying fake content

The future remains unwritten, but our actions today influence what it becomes.

Instant Time Savings with AI Example

SuperNormal - sends notes and transcripts of meetings via Google Meet, Microsoft Teams, or Zoom

Good alternatives: Fireflies.ai and Otter.ai.

Meeting with Declan Dunn & David @Demian Design notes

Wednesday, January 10th @ 12:32 PM | View on Supernormal.com

Meeting Participants

declan

The Gist

The startup funding landscape is shifting towards smaller rounds and less VC involvement. AI first movement requires vision and education. Focus on AI tutoring and quality data collaboration. Upskilling in engineering and human-centric design are crucial for AI success.

Summary

AI first movement and its impact on businesses: The conversation highlighted the challenges of explaining the implications of AI advancements to regular businesses and the need for a level of vision to understand the potential changes. It also discussed the shift towards targeting Enterprise first and the need to educate and train people to overcome bias and fear of AI technology.

Evolution of startup funding: The conversation touched upon the evolution of startup funding, with a shift towards smaller rounds of funding and less VC involvement. It also mentioned the ability to place smaller bets and have something going without the need for large capital, highlighting a change in the startup landscape.

Focus on developing AI tutoring and training programs to cater to the growing demand for experiential learning and practical application of skills.

Emphasize the importance of quality data and the need to collaborate and share information in order to improve the overall quality of AI-generated content and avoid legal issues related to data scraping and copyright.

Importance of human-centric design in AI: Discussion on the need to design AI from a human-centric point of view to create safer and less biased products.

Upskilling and the changing landscape of engineering: Conversation about the changing skill requirements for engineers and the need for foundational understanding of AI and coding for future career success.

Importance of teaching empathy, collaboration, and critical thinking skills in startups and the value of mentorship in the business world.

The significance of understanding profit and loss statements, key weaknesses and strengths of a business, and the accessibility of KPIs and data for decision-making in the current business landscape.

Amanda Slavin is working on a project using NFTs and AI bots to track and optimize students' learning experiences, including using blockchain technology for smart contracts and data ownership.

The use of NFTs and AI bots in education can revolutionize the way students learn and teachers understand their students' learning styles, while also protecting privacy and giving control of data to the students/parents.

AI agents are all the buzz, so try putting some into your meetings. From solopreneur to C-level executive, spending less time organizing, posting notes, and summarizing gives us time to do what we meet about.

Special bonus for doing this with remote workers and keeping a weekly record of your summaries. Great source of improvement and communication.

Unmasking AI Excerpts

“At this point anything was up for grabs. I looked around my office and saw the white mask that I'd brought to Cindy's the previous night.

As I held it over my face, a box appeared on the laptop screen. The box signaled that my masked face was detected.

I took the mask off, and as my dark skinned human face came into view, the detection box disappeared.

The software did not "see" me.

A bit unsettled, I put the mask back over my face to finish testing the code.

Coding in whiteface was the last thing I expected to do when I came to MIT, but, for better or for worse, I had encountered what I now call the "coded gaze."

You may have heard of the male gaze, a concept developed by media scholars to describe how, in a patriarchal society, art, media, and other forms of representation are created with a male viewer in mind.

The male gaze decides which subjects are desirable and worthy of attention, and it determines how they are to be judged.

You may also be familiar with the white gaze, which similarly privileges the representation and stories of white Europeans and their descendants.

Inspired by these terms, the coded gaze describes the ways in which the priorities, preferences, and prejudices of those who have the power to shape technology can propagate harm, such as discrimination and erasure.

We can encode prejudice into technology even if it is not intentional.”

From Unmasking AI by Dr. Joy Buolamwini

Dr. Joy Buolamwini's journey from a student at MIT to founding the Algorithmic Justice League (AJL) is a compelling narrative of recognizing and combating AI bias.

While working on a project at MIT, she encountered a significant flaw in facial analysis software: it failed to detect her face due to its underlying bias against darker skin tones.

This experience catalyzed her research into "unmasking bias" in facial recognition technologies, leading to the influential "Gender Shades" paper with Dr. Timnit Gebru, which highlighted gender and skin type biases in commercial AI products.

Buolamwini's work emphasizes the importance of equitable and accountable AI.

The AJL combines art and research to illuminate the social implications and harms of AI, highlighting the stories of people impacted by harmful technology in the "Coded Bias" documentary.

The organization advocates for robust mechanisms to protect people from AI harms and holds companies and institutions accountable for AI misuse.

Buolamwini's approach to addressing AI bias involves a blend of advocacy, education, and research.

By focusing on actionable solutions and bridging the gap from principles to practice, the AJL seeks to shift the AI ecosystem towards better practices, protecting those most vulnerable to AI's potential misuse.

For more details on Dr. Joy Buolamwini's work and the Algorithmic Justice League's initiatives, visit their website at www.ajl.org.

Unmasking AI: My Mission to Protect What Is Human in a World of Machines

by Dr. Joy Buolamwini

https://www.unmasking.ai/

Get the Book

https://www.penguinrandomhouse.com/books/670356/unmasking-ai-by-joy-buolamwini/

The No AI Fraud Act - Proposed in US

• Reaffirm that everyone’s likeness and voice is protected, giving individuals the right to control the use of their identifying characteristics.

• Empower individuals to enforce this right against those who facilitate, create, and spread AI frauds without their permission.

• Balance the rights against the 1st Amendment to safeguard speech and innovation.

One-page summary (from Rep. María Elvira Salazar (R-FL))

SUPPORT THE No AI FRAUD ACT

AI-Generated Fakes Threaten All Americans

New personalized generative artificial intelligence (AI) cloning models and services have enabled human impersonation and allow users to make unauthorized fakes using the images and voices of others.

The abuse of this quickly advancing technology has affected everyone from musical artists to high school students whose personal rights have been violated.

AI-generated fakes and forgeries are everywhere. While AI holds incredible promise, Americans deserve common sense rules to ensure that a person’s voice and likeness cannot be exploited without their permission.

The Threat Is Here

Protection from AI fakes is needed now. We have already seen the kinds of harm these cloning models can inflict, and the problem won’t resolve itself.

From an AI-generated Drake/The Weeknd duet, to Johnny Cash singing “Barbie Girl,” to “new” songs by Bad Bunny that he never recorded, to a false dental plan endorsement featuring Tom Hanks, unscrupulous businesses and individuals are hijacking professionals’ voices and images, undermining the legitimate works and aspirations of essential contributors to American culture and commerce.

But AI fakes aren’t limited to famous icons. Last year, nonconsensual, intimate AI fakes of high school girls shook a New Jersey town.

Such lewd and abusive AI fakes can be generated and disseminated with ease. And without prompt action, confusion will continue to grow about what is real, undermining public trust and risking harm to reputations, integrity, and human wellbeing.

Inconsistent State Laws Aren’t Enough

The existing patchwork of state laws needs bolstering with a federal solution that provides baseline protections, offering meaningful recourse nationwide.

The No AI FRAUD Act Provides Needed Protection

The No AI Fake Replicas and Unauthorized Duplications (No AI FRAUD) Act of 2024 builds on effective elements of state and federal law to:

• Reaffirm that everyone’s likeness and voice is protected, giving individuals the right to control the use of their identifying characteristics.

• Empower individuals to enforce this right against those who facilitate, create, and spread AI frauds without their permission.

• Balance the rights against the 1st Amendment to safeguard speech and innovation.

The No AI FRAUD Act is an important and necessary step to protect our valuable and unique personal identities.

SUMMARY

No AI FRAUD Act: Protecting Individual Rights in the Age of Digital Replicas

The draft legislation titled "No Artificial Intelligence Fake Replicas and Unauthorized Duplications Act of 2024" or "No AI FRAUD Act" aims to establish individual property rights over one's likeness and voice.

It responds to the misuse of AI technology in creating unauthorized digital replicas and voice simulations.

The Act defines individual, digital depiction, and digital voice replica, emphasizing the property rights in likeness and voice as intellectual property, transferable and descendible.

It includes provisions for authorized and unauthorized use, emphasizing consent and legal repercussions for violations, and balances these rights with First Amendment protections.

Pros:

Protection of Individual Rights: The Act safeguards individuals against unauthorized use of their likeness and voice, particularly against exploitation by AI technology.

Intellectual Property Recognition: Recognizes and treats voice and likeness as intellectual property, providing clear legal standing.

Accountability Measures: Imposes legal consequences for unauthorized usage, acting as a deterrent against potential misuse.

Cons:

Potential for Overregulation: The stringent regulations might stifle technological and creative innovation in digital media.

Enforcement Challenges: The global nature of digital media may make it challenging to enforce these rights effectively.

Balancing Act with First Amendment: Navigating the intersection of these rights with First Amendment freedoms could lead to complex legal disputes.

Will the Act affect individuals and companies working legitimately with AI technology?

Possibly. The Act could affect legitimate users in several ways:

Compliance Burden: Companies and individuals working with AI must ensure strict compliance with the Act, meaning increased legal and operational costs.

Innovation Deterrent: The Act may deter innovation in AI and digital media, as creators might fear unintentional violations.

Vague Definitions: If the Act has ambiguously defined terms, it might lead to confusion about what constitutes a violation, impacting legitimate AI activities.

These effects could limit the potential of AI in areas like digital art, entertainment, and even educational tools, where voice and likeness play a key role.