Is Using GenAI Ethical? I Asked an Alien, a Monkey, and a First Grader (for a friend)

I use AI. Then there’s GenAI Derangement Syndrome. People who have it don’t like that and instantly cancel me, even when I do the writing. Like my first-grade teacher labeling my silence as low intelligence.

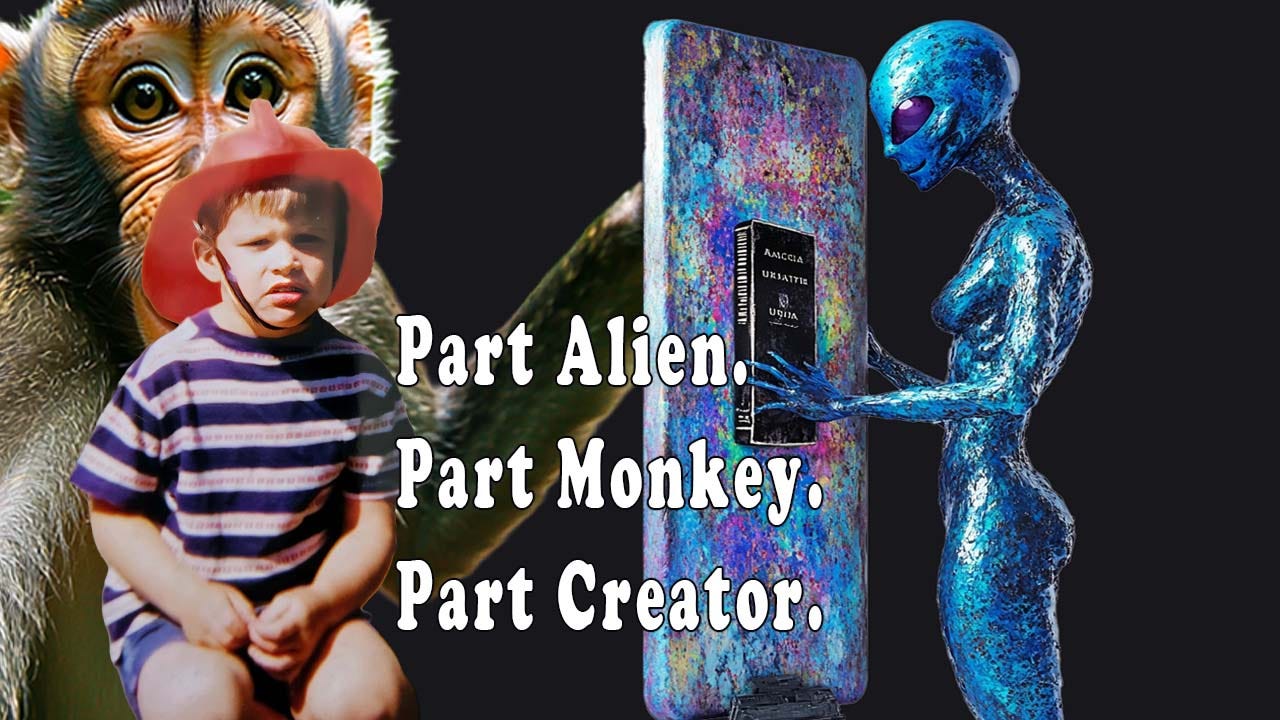

I feel like an alien using GenAI. Like a monkey trying to evolve.

I’ve felt what happens when you say AI out loud in certain “creative” rooms. Breathing stops, judgment starts, and it goes quiet. I’m wrong before I’ve had a chance to speak.

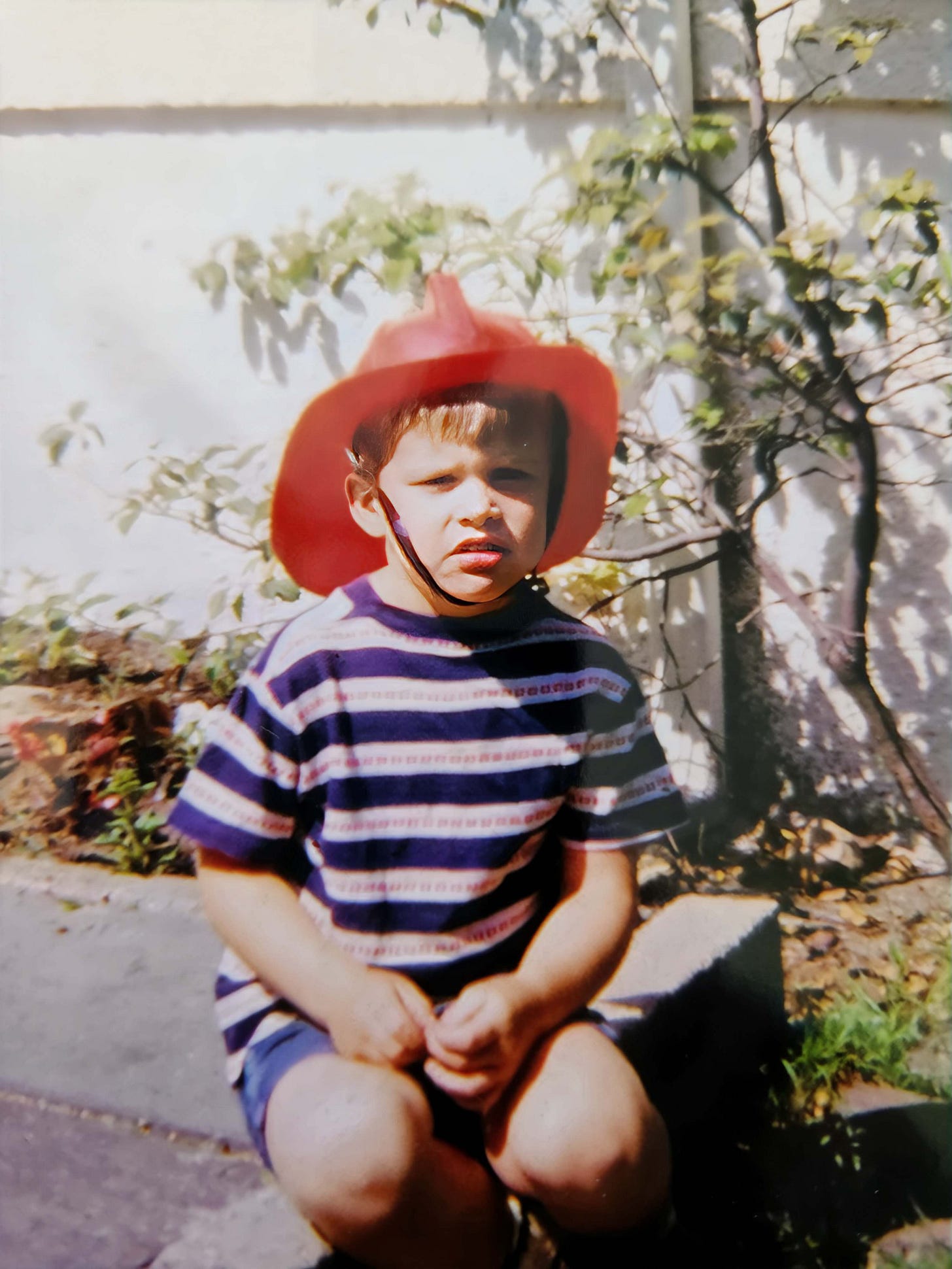

Same silence I felt moving halfway through first grade to a class of new strangers. The school put me in the lowest tier, the kind needing extra help to read. They looked at me and judged, the same instant stereotyping that happens to AI users now.

Didn’t matter if I’d been reading books for a few years by then. My introversion was their reason to classify me as a lower intelligence. Like an outsider to their world.

When I share about GenAI positively, or at least not ripping it out as some plague on society, the silence is loud. Not from me, from terse comments and deletions. Disdain.

Same reason I shut up in first grade: I started believing the teacher was right, and kids called me dumb (ironic, as that word also means mute) even though I wasn’t.

Instead, I got quiet and adapted into part alien, part monkey, and part creator. A little like I feel today as I keep getting asked the same gotcha question.

Is using AI ethical?

Running into GenAI Derangement Syndrome

Let’s name what’s happening, because it has a name. The person using GenAI gets your contempt, because what they do is alien. I just want to understand what primal principle that is. The ones shouting loudest online are writers, mocking those using GenAI. And writers like that rarely try it or, if they do, use it to create crap.

It’s a feeling petrified into a stance. A different shame for every action. Using GenAI to create an image, using it to edit? Shame on you. That’s a social signal, like cheating.

Call it GenAI Derangement Syndrome: the conviction that using GenAI is not just wrong but categorically wrong, end-of-creativity wrong, eugenics-level wrong. Anyone who does it forfeits the right to be taken seriously as a creator.

I’ve seen it up close. Say you use AI and watch what happens. Not always. But often enough. The unfollows. The deleted posts. The quiet removal from conversations that were fine yesterday. Move along, alien.

I understand the fear underneath it. I do. Worrying that something real is being lost. That the tools are moving faster than we can. That some people will use GenAI to replace craft rather than improve it, and the flood of mediocre output will make it harder to find the work that matters.

These are real concerns deserving real conversations.

Still, I wonder if this crowd would cheer for a macaque’s right to own his selfies, while treating a human using GenAI as the red line that cannot be crossed.

So yes. The monkey case is about more than a monkey. And let me start there, because Naruto deserves his moment.

Naruto and the Selfie He Wasn’t Allowed to Own

In 2011, a crested macaque named Naruto found a camera in an Indonesian wildlife reserve and did what any of us would do. He picked it up and took photos of himself. Lots of them. Some of them were good. Composed, even. The kind of selfie most humans can’t manage to do.

PETA sued on his behalf, to make a point. The argument: Naruto made the work. Naruto should own it.

The court said no. Non-human animals don’t qualify. Authorship requires a human being. After years in court, the two sides finally settled.

The photographer who owned the camera, who set up the conditions, who traveled? He kept 75% of the revenues. Agreed to donate the rest toward protecting Naruto’s habitat. The humans sorted it out. Naruto went back to being a macaque. He never left the jungle.

Nobody called the photographer unethical for profiting from a photo he didn’t technically take. No one blamed Naruto for a rogue selfie. The story was funny. Everyone found a compromise and moved on.

Now here’s where it gets strange.

The Alien Intelligence Who Wrote a Book

Starting in 1911, a group of people in Chicago began receiving messages from what they described as celestial beings. Superhuman intelligences. Non-human minds transmitting thousands of pages of cosmological and spiritual teaching through a person who received the transmissions, processed and shaped them into something readable.

This became the Urantia Book. 2,097 pages. Still studied over 100 years later. The origin story is exactly as unusual as it sounds.

In 1955, the Urantia Foundation applied for copyright. The question the courts had to answer over the next 40 years: can work authored by non-human intelligence be protected? Who owns the output of beings that may or may not exist?

Yes, the courts said. It can be protected. Just not owned by the celestial beings. Well, except for one judge in the early 1980s who temporarily found in favor of these beings, because both sides of the lawsuit agreed that celestial intelligence created the book.

The one who received the non-human intelligence and translated it into something the world could use?

He’s the human in the middle, who ended up owning it. I think about first-grade me with that book. Taking in something external, making it mine. Not so different. What’s a book without a reader?

Celestial intelligence made the Urantia Book. Everyone found this fascinating. No one called it plagiarism. No one questioned the ethics of working with a non-human mind. The book has sold millions of copies over the years.

More recently, a woman named Kristina Kashtanova wrote a graphic novel, Zarya of the Dawn. She wrote the story herself. She used AI to generate images, selected the ones that worked, arranged them, and built the book. In 2023, the Copyright Office gave her protection for the text, the selection and arrangement, the book.

The AI-generated images inside? Unprotectable. Not enough human authorship in the generation of each one.

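The law treats the two cases much alike: the human owns what the human shaped. That part is defensible. What’s less defensible is which one gets treated as plagiarism by some. As a moral failure.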

What We Were Taught About the Ethics of Intelligence

I was raised, in the US, on a specific idea of intelligence. Read. Write. Memorize. The person with the most stored in their head won. That was the model. The test was: can you reproduce what you’ve accumulated? Can you write the essay without looking anything up? Can you show your work using only what’s already inside you, RIGHT NOW?

When I moved in the middle of first grade, shy and introverted, my new cutting-edge grade school in Massachusetts saw me and put me in the lowest of three tiers of classes based on intelligence. And I’d been reading books since I was 5 years old.

Luckily my mother’s diligence and care made them reconsider me. I’ll never forget the man walking into the room and asking for me, then mistaking another boy in the class for me. He gave that boy the book, and the boy wasn’t yet able to read. Then he gave it to me and saw that I knew how to read. No one had asked me before.

But without my mother’s urging, I’d have been stuck in that class. Because the measure of intelligence in those early days was extroversion. Now it’s being human. While we don’t know if intelligent life is out in space yet, we do know there are many different intelligences on this planet.

Dolphins show advanced social complexity, self-awareness, and communication skills. Elephants also show self-awareness, with long-term memory and emotional depth. AI, in its way, is another intelligence we don’t yet understand. Maybe the 20th-century, book-centric way of learning is opening up to listening, watching, and experiencing.

I love books. I’m not arguing against reading. But I’m old enough to remember when that model was the only model. A lot of people it was supposedly serving weren’t served by it.

Writing is hard. Reading is hard. A lot of people went through twelve years of training designed to build those skills and came out the other side unable to use them. Then they were made to feel less intelligent because of it.

And now when they look for help from GenAI, we want to protect that system.

Because AI threatens the altar. But the altar was never human intelligence.

I know that the generation coming up is learning differently, thinking differently, building differently. And they’re not wrong for using GenAI. What looks like laziness from critical eyes might just be a different relationship to where the learning and thinking happens.

Memorization and writing aren’t the only measures of intelligence. Nor was my quiet introversion in first grade. Or my wary silence in discussions filled with angry creatives ready to troll anyone who talks about the possibilities of AI along with the problems.

The AI ethics crowd, in my experience, is often defending a particular aesthetic of effort. The visible struggle. The human hand-written draft. The thing that looks the way intelligence looked when we were in school. The good old days, as every aging generation, or aging idea of creativity, remembers them.

That’s not ethics.

That’s nostalgia with a mission statement to go backwards.

If you’d cheer for Naruto, and find the Urantia Book worth reading, what changes when the non-human intelligence has a server address?

I’m asking for a friend.

I'm a published novelist, very interested in understanding, experimenting with, and using AI for a wide variety of research and writing tasks. I don't make any secrets about the ways I use it for my posts, but I don't write about my experiments with various models across a couple of platforms because of the strong anti-AI sentiment in the writing community, fueled both by the real (and sad) misuse of these tools and the uninformed fear that's widespread out there. I appreciate and applaud what you're doing here. Thank you.