We Don't Trust AI Rats, But We Trust AI Judges

A rat with giant balls made it through peer review. Nobody stopped it. The research was solid and cancelled. Now the same AI we don't trust decides who's cheating, making you prove you're human.

Gazing into the deep brown eyes of Shelley, my breath slows and my heart calms. German Shepherds do that for me; I post way too much about her.

Suddenly, a German Shepherd video pops up in my feed; I watch, of course. The video ends, and there are two more. Next day, more, and the next day, more and more, until all I’m seeing are German Shepherds.

Training vids, playing vids, weird little dog dressed as human vids: I’ve never seen so many new dog ads.

My breath speeds up, and that little gurgle in my stomach feels like I ate a bad shrimp. Something’s watching me, maybe listening to me through my phone; I don’t know what.

It makes me nervous to like or comment on anything, because I’ll get more of the things I don’t really want. Faster.

Maybe I should just trust the science of what’s going on and ignore what’s happening. But that’s like turning your eyes away from a rat with giant balls.

It probably needs a wheelbarrow to walk down the street.

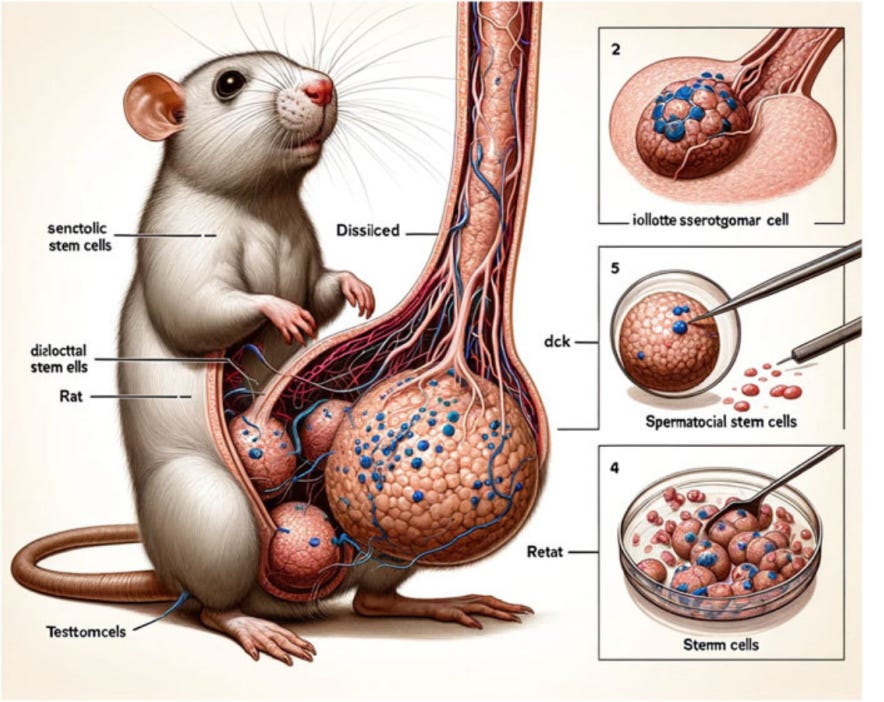

Curious, I dove into the link to find out more. I don’t know much about scientific publishing, but I do know AI images, and this is clearly one. Imagine how bad the research must be.

I found the rat in the paper, “Cellular functions of spermatogonial stem cells in relation to JAK/STAT signaling pathway.” I’d translate that for you, but I can’t, though clearly testicles are involved.

Seeing that on an X post gave me ju-deva: seeing something I wished I’d never seen before. I mean, they’re twice the size of its head.

I was surprised to find this in a Frontiers journal, from a science publisher that claims to be “safeguarding peer review to ensure quality at scale”. The study went through an editor in India, then reviewers in the US and India approved it. OK, and no one noticed the massive growth dominating the image like elephantiasis.

At least somebody noticed that the labels on the rat image had misspellings and nonsense phrases, like Midjourney gibberish. The X post went viral, with scientists and armchair commenters who know nothing about science all attacking the study.

The researchers didn’t hide their AI usage for the rat drawings.

The US reviewer, when asked about the rat, said it was Frontiers’ issue, not his job.

Frontiers retracted it with a disclaimer saying, in effect: we’re just the publisher. The problem lies with the person publishing.

No one accepts responsibility. Meanwhile, do you trust scientific research that comes with humongous rat testicles created by AI?

“Trust the science” is blurted out by people who aren’t scientists. People on social are looking for a little anger, stoking their tribe’s anger to get seen. Slap the AI label on something, and it’s all crap.

I’m wondering what happens to these people and their research when the AI slap happens. Especially in academic circles, where science research lives: did this study hurt the researchers who created it because of a viral social screamfest?

So I went to Jason Rodgers, who is now a Forensic Officer with the Scotland police.

I asked him what he saw as the biggest challenge in detecting fake, AI-generated scientific content:

“I believe the biggest challenge with AI-generated science content is going to be the risk of false positives…

Recently they’ve also started bringing in automated AI checkers to see if there’s any AI-generated content in there. I’ve seen people online complaining or claiming… their submissions, which are 100% original content, are being flagged as having a high content of AI-generated media. That’s been either dismissed or flagged for additional review, or they’re being penalized for it in some way.

I don’t know whether their claims are true or not, because I don’t know the people and I haven’t seen their submissions… I think my biggest worry is false positives.”

My mind was still so wrapped around the rat balls image that I hadn’t thought about what that judgment does to researchers. We don’t trust anything with AI attached to it.

But we use AI detection tools, famous for not being that accurate, to do the work. And their judgment of what is AI-generated is trusted by universities and publications to protect them against the stigma of using AI.

We can’t trust whether something is real or fake. While the rat image was fake, the actual research was solid, and it was retracted anyway. Cancelled for its use of AI.

Those in the firing line of AI detection must prove they wrote their work. The problem is every paper that looks right, reads right, and passes every check, yet nobody reads it. Not even the ones punishing you for using AI, because they can’t tell the difference.

Neither can the bleary-eyed student rubbing sleep out of their eyes, afraid they are better at science than at writing, knowing their average writing skills might trigger AI detection tools. The tools aren’t accurate; it’s a coin flip. Yet we assume they do the job better than a human would.

And I can’t trust whether Shelley’s real or AI.

When I go to Facebook, I don’t click on any German Shepherd videos, and they go away.

When a reviewer sees a rat with a giant penis, they should slow down and fix the rat’s balls.

We don’t trust AI, but we trust AI to punish.